Tools for Thought, Rust

Where is our "bicycle for the mind"?

Tools for Thought

Recently, I’ve been digging into “Tools for Thought”. As awkward and vague as that phrase sounds, the field itself is undergoing a bit of a renaissance.

The thesis behind Tools for Thought is simple: while computers have helped us do many things, the systems for learning and thinking have remained largely unchanged over the last 40 years.

Think about the act of reading a book. You can read a book on a Kindle, you can listen to it as an audiobook, or you can order the hardcover copy and have it arrive in days. And yet, the content and structure of books haven’t really evolved in the last 50 years.

Outside of a handful of specialized use cases, we’re missing tools which help us learn, adapt, and spot connections.

That’s why I’ve been going down the rabbit hole and reading a bunch of the essays published by Andy Matuschak and Michael Nielsen (frequent collaborators, and some of the best thinkers in the space). If you’d like to learn more, I’d recommend the essay the two put together on Developing Tools for Thought.

Memory is a choice

The post that originally got me interested in the subject is Michael Nielsen’s writing on Augmenting Long-term Memory.

In the post, Nielsen makes the case that long-term memory is a choice. Practically speaking, we can have “near-perfect” recall just by taking a few steps with spaced repetition. We just have to choose the things we’d like to remember.

If the claim sounds like hyperbole to you, you aren’t alone. It sounded like hyperbole to me too! Just imagine what you could do if you could remember 5-10x the information you encountered in a normal day?!

The first tool that everyone seems to agree is important is a system for spaced repetition.

Spaced Repetition with Anki

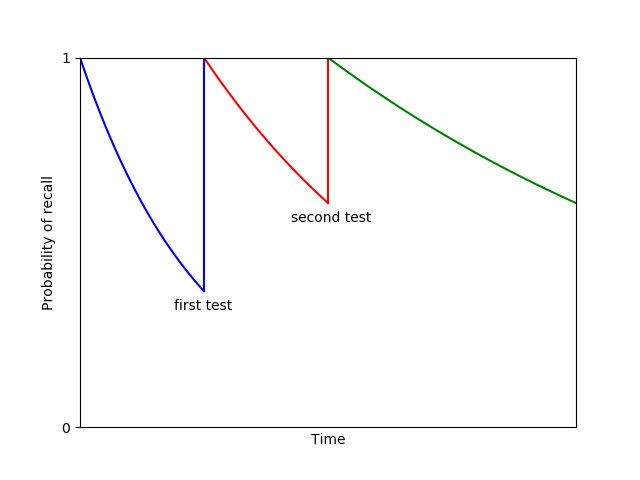

The general idea behind spaced repetition is that at any given time, we are likely in a state of forgetting old information. As time goes on, we’re less likely to remember it.

But when we are forced to recall that information, we remember it a little better. And the curve of forgetting becomes a bit more gradual.

Of course, recalling information right after we’ve learned it doesn’t do much. It has to take a little bit of work to pull it from our long-term memory back into short-term memory.

This is the crux of spaced repetition. Spaced repetition tries to prompt our moment of recall right at the point where we are about to forget the information.

This works practically by writing down things you want to remember, and then using a system to prompt you to recall those things at progressively longer intervals in the future.

The most popular system people seem to use is called Anki. It functions pretty much like any other flashcard app, except that it will ask you for a given card on a steadily increasing cadence.
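To make the “steadily increasing cadence” concrete, here’s a minimal sketch in Rust. It is not Anki’s actual scheduling algorithm. The `Card` struct, the `review` method, and the doubling rule are all assumptions I made up for illustration: a successful recall doubles the interval, and a miss resets it to one day.

```rust
/// A hypothetical flashcard; not Anki's real data model.
struct Card {
    question: String,
    answer: String,
    /// Days to wait before showing the card again.
    interval_days: u32,
}

impl Card {
    fn new(question: &str, answer: &str) -> Card {
        Card {
            question: question.to_string(),
            answer: answer.to_string(),
            interval_days: 1,
        }
    }

    /// Update the interval after a review.
    /// Assumed rule: double on a successful recall, reset to 1 day on a miss.
    fn review(&mut self, recalled: bool) {
        self.interval_days = if recalled {
            self.interval_days.saturating_mul(2)
        } else {
            1
        };
    }
}

fn main() {
    let mut card = Card::new("What does spaced repetition space out?", "Moments of recall");
    println!("Q: {} / A: {}", card.question, card.answer);
    // Simulate five reviews: three hits, one miss, one hit.
    for &recalled in [true, true, true, false, true].iter() {
        card.review(recalled);
        println!("recalled: {}, next review in {} day(s)", recalled, card.interval_days);
    }
}
```

With this toy rule, three successful reviews push the card out to 8 days, and a single miss brings it back to 1.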

Here’s an example I put together from one of my slide decks…

Anki is super flexible, which means that you have to be a bit disciplined about how you use it. The general recommendation is to not use many of the fancy features, and instead just focus on the habit of writing good questions and answers.

A good rule of thumb is that you should be recording 2-3 items for everything you read that you’d like to remember. The long-term memory post has a bunch of good tactical tips on writing them, so I’d recommend checking it out if you’re serious about it.

I have to admit that I was a little skeptical of using flashcards for anything when I first started doing spaced repetition. I’ve never been particularly into either flashcards or memorization before, since I feel like I can always look up what I need to.

The key “a-has” that changed my mind are…

You have to care about the things you want to remember: you don’t have to remember everything. If there’s some field you don’t care about, don’t add it to your spaced repetition system. People often say “spaced repetition didn’t work for me” because at the end of the day, they didn’t care about remembering the field they were learning.

Remembering something doesn’t take much time: with a spaced repetition system, you will probably spend around 5 minutes of review over the course of a lifetime to remember a given item. It’s a very low-cost effort, provided the tools are good.

Repetition forms the “building blocks” of larger concepts: one thing that held me back from exploring spaced repetition is that remembering things seemed low-value. Why should I bother, when I can just look it up? I’ve now come around to the idea that repetition gives you a fluency with subject matter, just like if you were learning a language. Take, for example, trying to read a French novel with only a translation app. Sure, you could look up each new French word, but that will make reading anything longer than a few sentences a massive slog.

From what I can tell so far, the system really works for committing anything to memory. I’m trying to build the practice on everything from books I’ve read to programming languages.

Roam Research

The second tool that I’ve picked up is Roam Research, on the recommendation of a few friends who use it. What makes Roam interesting is that it explicitly helps you link together different thoughts, sort of like a wiki page.

Rather than explain it, I think it’d be easier to show you.

Each day starts with a set of Daily notes. Here’s an example of my daily notes from this past weekend’s reading.

Using these tags, I can search for everything with a hashtag, or click any of the [[Items]] to open a new page. I’ve tried to adopt a system of making each proper noun its own page.

The result is that I’m able to see connections I might not have found otherwise! I can connect my ideas temporally to different days. And I can also see which tags connect as part of a larger graph.
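To show why the [[Items]] convention is enough to build a graph, here’s a rough sketch of the idea in Rust. This is not how Roam is implemented. The function names `extract_links` and `backlinks` and the sample notes are invented for illustration, and the parsing ignores nested links.

```rust
use std::collections::BTreeMap;

/// Return the page names wrapped in [[double brackets]] within a note.
fn extract_links(note: &str) -> Vec<String> {
    let mut links = Vec::new();
    let mut rest = note;
    while let Some(start) = rest.find("[[") {
        let after = &rest[start + 2..];
        match after.find("]]") {
            Some(end) => {
                links.push(after[..end].to_string());
                rest = &after[end + 2..];
            }
            None => break, // unclosed link: stop scanning
        }
    }
    links
}

/// Map each linked page to the titles of the notes that mention it.
fn backlinks(notes: &[(&str, &str)]) -> BTreeMap<String, Vec<String>> {
    let mut index: BTreeMap<String, Vec<String>> = BTreeMap::new();
    for &(title, body) in notes {
        for page in extract_links(body) {
            index.entry(page).or_insert_with(Vec::new).push(title.to_string());
        }
    }
    index
}

fn main() {
    // Hypothetical daily notes, keyed by the day they were written.
    let notes = [
        ("Saturday", "Read [[Michael Nielsen]] on [[Spaced Repetition]]"),
        ("Sunday", "Made new cards about [[Spaced Repetition]]"),
    ];
    for (page, mentioned_in) in backlinks(&notes) {
        println!("{} <- {:?}", page, mentioned_in);
    }
}
```

Each page ends up with a list of the days that mention it, which is the temporal connection described above.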

I’m viewing this as a habit I’ll try and build upon over the next six months. After all… Roam wasn’t built in a day! 🙃

Where are the commercial offerings?

The biggest question I have is why these sorts of systems aren’t more popular. And why hasn’t there been big commercial interest in them?

We’ve funded all sorts of social networks, mobile apps, and even enterprise tools. But it feels like there’s still a big gap around how people learn, think, and remember. It’s so fundamental to most of the work I’ve seen over the course of my career, I’m a bit baffled that the tools aren’t better.

There are some great examples at the end of the essay How can we develop transformative tools for thought? that explore this very question. Could we see more executable and interactive books written in the next 50 years?

I’m eager to keep trying these out and will report back on what I’ve found.

Adventures in Rust

On the side, I’ve been spending time learning Rust as part of Advent of Code. If you aren’t familiar, Advent of Code is a set of programming problems, published once per day during the month of December. I’ve found it to be a fairly lightweight way to learn a new language.

I’m still new to writing Rust (about two weeks in), so I wanted to capture my thoughts here.

A memory-free C++

One way you might think about Rust is as a more modern replacement for C++. Most of the high-performance code you might want to write in a low-level language can be written in Rust.

You might be thinking “Okay, but why something that’s not C++?”.

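The Big Language Idea behind Rust is that it gets rid of manual memory allocation and freeing. Every value has an owner, and the value is cleaned up automatically when its owner goes out of scope. It’s the pattern C++ calls “Resource Acquisition Is Initialization” (RAII), except that in Rust the compiler enforces it. As a programmer, you focus on passing objects around your code, and the compiler ensures that nothing is used after it has been freed.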

In much the same way that Java tried to get rid of any concept of pointers, Rust puts a focus on removing any option the programmer has to do something which isn’t memory-safe (outside of explicitly marked unsafe blocks). That means no malloc, no free. And hopefully, no use-after-free bugs or buffer overflows, and far fewer memory leaks. It also means that you avoid the garbage collection overhead that Go and Java have.
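Here’s a small sketch of what that looks like in practice. The `Buffer` type and the shared `log` are hypothetical, added only so the cleanup order is observable. The point is that `drop` runs automatically when a value’s owner goes out of scope, with no explicit free.

```rust
use std::cell::RefCell;
use std::rc::Rc;

/// Hypothetical resource that records when it is released.
struct Buffer {
    name: &'static str,
    data: Vec<u8>,
    log: Rc<RefCell<Vec<String>>>,
}

impl Drop for Buffer {
    // Called automatically when the value goes out of scope.
    fn drop(&mut self) {
        self.log
            .borrow_mut()
            .push(format!("freed {} ({} bytes)", self.name, self.data.len()));
    }
}

/// Creates two buffers in nested scopes and returns the events in order.
fn run() -> Vec<String> {
    let log = Rc::new(RefCell::new(Vec::new()));
    {
        let _outer = Buffer { name: "outer", data: vec![0; 1024], log: Rc::clone(&log) };
        {
            let _inner = Buffer { name: "inner", data: vec![0; 16], log: Rc::clone(&log) };
            log.borrow_mut().push("end of inner scope".to_string());
        } // `_inner` is dropped here, and its Vec is deallocated
        log.borrow_mut().push("end of outer scope".to_string());
    } // `_outer` is dropped here
    let events = log.borrow().clone();
    events
}

fn main() {
    for event in run() {
        println!("{}", event);
    }
}
```

Each “freed” line is logged right after its scope ends, even though the code never calls a cleanup function itself.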

Oh yeah. You can still call C code natively, and C++ code through a C-compatible interface.

Aggressively helpful

I’m still early in my journey of feeling comfortable with Rust, but even after a few days I can understand why people like it.

The best part about Rust is the tooling. That may sound strange when talking about a language, but it’s really true. I’ve never seen a language or community that is so aggressively helpful.

To see what I mean, take the act of learning Rust as a newcomer. The Rust website happily points you to three different resources:

The Rust Book: a long-form book that’s really approachable and breaks down all the features of the language

Rust by Example: a set of code snippets written in idiomatic Rust

Rustlings: a set of exercises where you have to get each file compiling and working

It’s pretty clear that the team behind these systems realized different people learn in different ways. Some are more hands-on, while others like to read in-depth descriptions.

As if the docs weren’t aggressively helpful enough… there’s the compiler.

Most times you work with a compiler, it’s bound to give a somewhat cryptic error indicating where the problem is. You go and google it, and then follow a set of StackOverflow links to finally figure out the error.

Rust goes a step further: it gives you advice on how to fix that error too!

The result is that you get these incredible messages telling you where you screwed up, and what’s probably going wrong.

The tooling doesn’t stop there either. Rust ships with Cargo, a built-in package manager designed to work with crates.io, the repository for all Rust crates. The result is that my code feels like it’s quickly becoming idiomatic, without a lot of ceremony.

I wouldn’t call Rust a beautiful language. It doesn’t have the simplicity of Go, or the nice high-level semantics of Python. But that said, there’s one aspect of Rust that I could see myself using a LOT.

It’s the functional .map, .collect, .fold methods. As an example, here’s how to parse a string full of integers:

    content                                  // take our string
        .lines()                             // split into lines
        .map(|x| x.parse::<i32>().unwrap())  // parse as 32b-int
        .collect::<Vec<i32>>()               // collect into a vec

I’m half-convinced that Rust programmers rarely need to write a for-loop, because they can accomplish this with a very simple set of methods.
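Building on the chain above, here’s a hedged sketch of the same idea with `.fold`, and without `.unwrap()`. The function names `parse_lines` and `sum_lines` are made up for this example. Collecting into a `Result` stops at the first line that fails to parse instead of panicking.

```rust
use std::num::ParseIntError;

/// Parse one integer per line; returns the first parse error, if any.
fn parse_lines(content: &str) -> Result<Vec<i32>, ParseIntError> {
    content
        .lines()                           // split into lines
        .map(|x| x.trim().parse::<i32>())  // parse each line as a 32-bit int
        .collect()                         // Ok(Vec<i32>), or the first Err
}

/// Sum the parsed values with `fold`, starting from an accumulator of 0.
fn sum_lines(content: &str) -> Result<i32, ParseIntError> {
    let values = parse_lines(content)?;
    Ok(values.iter().fold(0, |acc, x| acc + x))
}

fn main() {
    let content = "1\n2\n3\n";
    println!("{:?}", parse_lines(content));
    println!("{:?}", sum_lines(content));
    println!("bad input is an error: {}", sum_lines("1\noops\n").is_err());
}
```

For a quick puzzle script, `.unwrap()` is fine. The `Result` version is what I’d reach for once the input can’t be trusted.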

The one last interesting thing is that I’ve noticed my code really is much more bug-free! I did last year’s Advent of Code as well, and I’d frequently have a subtle bug that caused my answer to be off. Not so this year.

The parts I find confusing

There are a lot of parts of Rust that just feel clunky when I compare them to Python or Go.

The Kitchen Sink problem: compared to Go, Rust has a *crazy* amount of language features. I’m sure they are useful for various narrow use cases, but it does create a bit of a steep learning curve up-front.

Threading: I’ve re-read the Rust sections on threading a few different times, but it doesn’t really make sense to me compared with kicking off a goroutine.

Ownership and Borrowing: because Rust isn’t garbage collected, there are additional rules put on the programmer for how variables are used. Generally, this scheme is referred to as Ownership and Borrowing. I’ve found I’ve had to re-read the semantics on ownership many times over. It’s a very powerful concept, but it’s one where the syntax tends to trip me up. It’s a very different approach from any other language I’ve worked with.
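For comparison with a goroutine, here’s a minimal sketch using only the standard library. The helper names `total` and `sum_in_thread` are invented for illustration. `thread::spawn` plays roughly the role of Go’s `go f()`, and the `move` keyword is where ownership shows up: the vector is moved into the new thread, so the caller can no longer touch it, while `total` merely borrows a slice.

```rust
use std::thread;

/// Borrows a slice: the caller keeps ownership of the data.
fn total(values: &[i32]) -> i32 {
    values.iter().sum()
}

/// Takes ownership of the vector and sums it on another thread.
fn sum_in_thread(values: Vec<i32>) -> i32 {
    // `move` transfers `values` into the closure, and so into the new thread.
    let handle = thread::spawn(move || total(&values));
    // `join` waits for the thread and hands back its return value.
    handle.join().expect("worker thread panicked")
}

fn main() {
    let numbers = vec![1, 2, 3, 4];
    // Borrowing: `numbers` is still usable afterwards.
    println!("borrowed total: {}", total(&numbers));
    // Moving: after this call `numbers` is gone; using it again would not compile.
    println!("threaded total: {}", sum_in_thread(numbers));
}
```

Unlike a goroutine, the spawned thread returns a value through its `JoinHandle`, so this small case needs no channel.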

Why?

There’s a good part of me that just wanted to learn Rust because, well, why not?

But the bigger reason I’m interested is that Rust compiles natively to WASM. What that means is you can write a Rust library for high-performance work, and then call it from JavaScript in the browser!

You could imagine this has all sorts of utility when it comes to building a rendering engine, or loading millions of objects into browser memory. I’m excited to see what sort of speedup it results in.
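As a rough sketch of what the Rust side of that could look like, here’s an exported function that uses only the standard library. The function `fib` is a stand-in I chose for this example, and the build setup is an assumption: compiled as a `cdylib` for the `wasm32-unknown-unknown` target, the function should show up among the module’s exports, where JavaScript can call it. Many projects use the `wasm-bindgen` crate for richer types; this sketch deliberately avoids it.

```rust
/// Iterative Fibonacci, exported with an unmangled name and the C ABI
/// so a WebAssembly host can look it up as `fib`.
/// Note: on the 2024 edition this attribute is written `#[unsafe(no_mangle)]`.
#[no_mangle]
pub extern "C" fn fib(n: u32) -> u32 {
    let (mut a, mut b) = (0u32, 1u32);
    for _ in 0..n {
        let next = a.wrapping_add(b); // wrap instead of panicking on overflow
        a = b;
        b = next;
    }
    a
}

fn main() {
    // The same function can be called natively, which is handy for testing.
    println!("fib(10) = {}", fib(10));
}
```

Only plain numbers cross the boundary here. Passing strings or large arrays between JavaScript and WASM takes more plumbing, which is where the extra tooling earns its keep.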

More on that soon. Happy holidays! :)

Love the topic. Spaced repetition was a revelation for me. Wondered how Anki might be used to memorize something visual, say the segment architecture. Also do tools for memory go down in value as fast access to our "memory" through tools like Roam go up? LT recall aside, love how they are forcing functions for deeper inquiry and synthesis

I started using Roam about three months ago, and their user community (reddit, Medium & youtube) coupled with the release notes has been a big help to navigating a steep learning curve. Curious to hear more about how you're using it and what you think of it, seems like it's a tool that gets more and more powerful the deeper you go.